Overview

Analytics By Design (ABD for short) is a new methodology that GovTech is piloting to improve the analytics-readiness of projects. A project that is analytics-ready allows Data Scientists, Analysts and even business teams to get down to analysis quickly and derive insights faster. Conversely, a project that is not analytics-ready will run into issues with data extraction, or risk having dirty and incomplete data derail the analysis.

ABD can be applied broadly, whether you are building products & platforms, running programmes, or drafting policies. It helps you become more data-driven in your work, and makes the data work for you by uncovering actionable insights that improve your project’s prospects for success from the start.

At its heart, Analytics By Design is a philosophy of how we should handle the collection and generation of data. When the philosophy is embraced, project teams and their leaders make the effort to strategise and meticulously plan which data fields to collect or generate before the project starts or launches. This lays a strong foundation for analytics-readiness. Even if the analytics work happens weeks or months after the project has started, this planning helps to avoid a scramble to extract, clean and prepare the data.

Why Adopt Analytics By Design?

The ABD methodology came about to counter the lack of forward planning for, and emphasis on, how data is collected, generated and used for downstream analytics. Data is no longer used just for transactions in products & platforms; it must not only keep the systems running but also be primed for analytics work.

Underpinning the Analytics By Design methodology is a set of values to define our behaviours and actions when embarking on a new project. We have been saying that data is an important asset but many have failed to act and behave in a way that matches those aspirations. Embracing these values will help everyone approach their projects in a way that ultimately boosts their analytics-readiness.

To that end, these are the values that Analytics By Design promulgates:

- Data is a planned output, not a by-product. Data that is ready for analytics often comes about through deliberate planning. Don’t leave this to chance and hope that your data will be useful for analytics. Data generated or collected is generally used to facilitate transactions, operations and logistics planning. Don’t assume that your data will be useful for analytics just because your project works. Value from data is derived when it eventually powers actions, not when it is accumulated.

- Analytics is a core feature, not a good-to-have. More often than not, analytics is thought of and executed after the project is launched. When building a product, an analytics module might be the last feature to be built, or it is postponed if the project needs to launch. After running an event, analysis happens as an afterthought, and only then does the team realise that the survey questions or registration details don’t tell them what they want to know. We need to bring analytics back as a core component of running a project.

- Data-driven is a shared goal, not a problem to be solved alone. Being data-driven in our projects requires everyone (leaders and project members, business teams and tech teams) to work for it. It is a pipeline of responsibility, from planning how the data will be used, to generating and collecting it, and then analysing it for insights. Leaders must demand such a workflow, and support the teams to make it happen. Likewise, the teams must see the value and prioritise analytics alongside other project needs. The success of analytics in leading to impactful outcomes cannot be seen as solely a business problem, or a tech problem. Both parties must come together to work out business actions and questions, and have the underlying tech support for data collection and generation.

At the end of the day, ground realities can be challenging as project teams grapple with tight deadlines, limited bandwidth, and shifting demands. This set of values acts as a compass to guide you back to keeping your projects analytics-ready for downstream work.

How to Implement It?

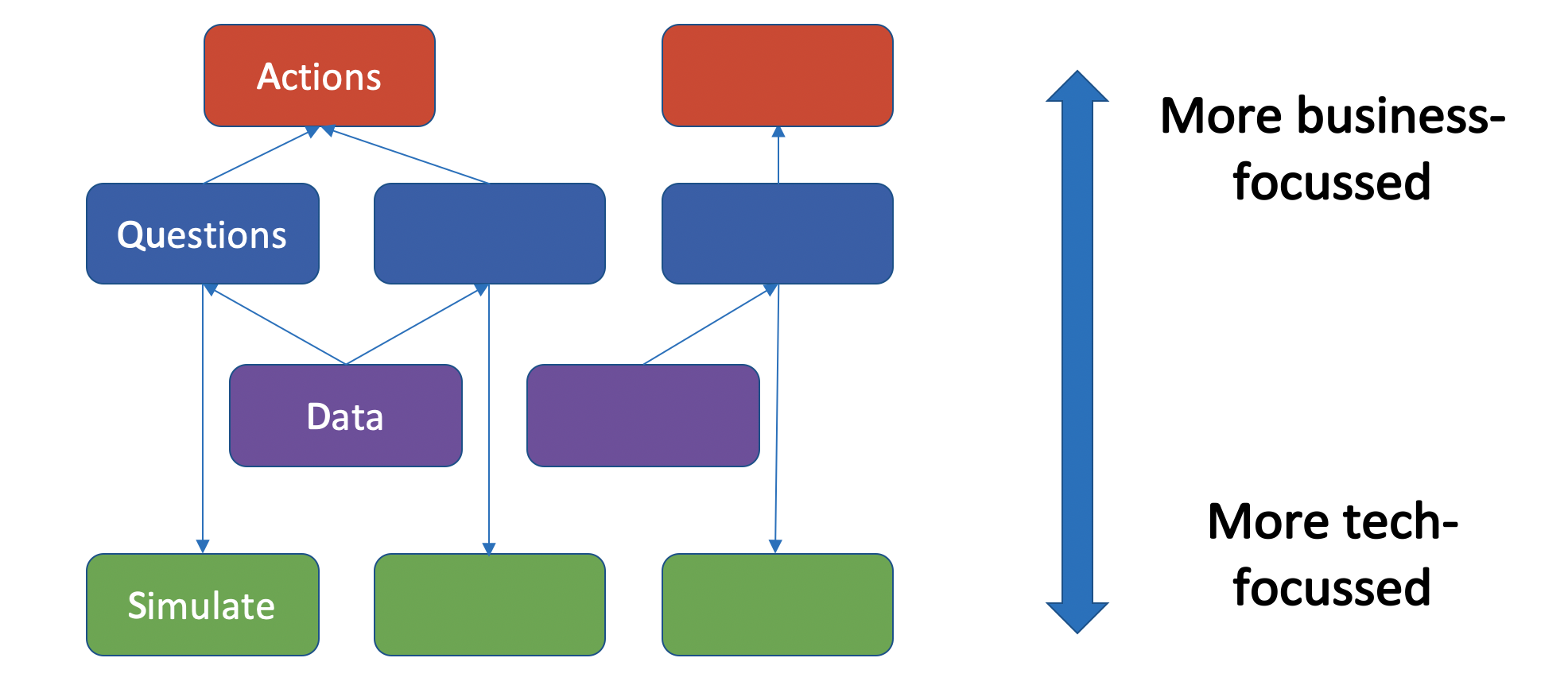

The way to implement this is to achieve a strong synergy between the actions we take (Action), the questions we ask to guide our decisions (Question), and the data we collect to validate them (Data). The synergy is verified by charts, dashboards or scripts on mock data before the project begins (Simulate). This is the QuADS framework.

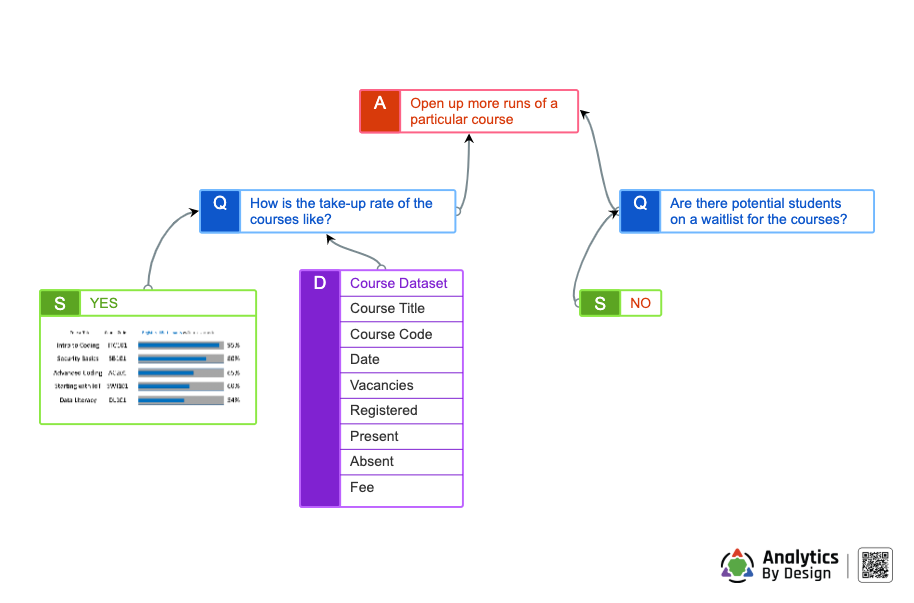

When embarking on a new project, the project team can get together to draw up a QuADS diagram, which organises the Actions, Questions and Data into a clear mind map of sorts. This diagram allows the team to quickly identify which areas they can act on with data, which pertinent questions need to be answered, and what data they need to achieve the intended level of insight.
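As a rough illustration, the contents of a QuADS diagram could also be captured as plain data, so the team can see at a glance which Questions are tagged to an Action. The sketch below is a hypothetical Python representation, not an official QuADS format; the `Question` class, the `untagged_questions` helper and the example entries are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Question:
    """One Question in a QuADS diagram (hypothetical structure)."""
    text: str
    actions: list[str] = field(default_factory=list)      # Actions this Question informs
    data_fields: list[str] = field(default_factory=list)  # Data fields expected to answer it


def untagged_questions(questions: list[Question]) -> list[str]:
    """Return the text of Questions that are not tagged to any Action."""
    return [q.text for q in questions if not q.actions]


# Made-up entries for an event-registration project.
quads = [
    Question(
        text="Which sessions had the lowest attendance?",
        actions=["Decide which sessions to drop next year"],
        data_fields=["session_id", "attended"],
    ),
    Question(
        text="How does the app perform with 1,000 concurrent users?",
    ),
]

# Questions without an Action are candidates to defer.
low_priority = untagged_questions(quads)
print(low_priority)
```

Keeping the structure this simple is a deliberate choice in the sketch: it mirrors the mind-map nature of the diagram rather than a technical data model.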

QuADS Framework

In a nutshell, the QuADS framework helps you achieve synergy between your Actions, Questions and Data through a process of Simulation. None of these components can stand alone. The stronger the Question-Action-Data synergy is, the higher the analytics-readiness of your project.

Here is how you should apply the QuADS framework:

- Question - Ask plenty of questions about your project and record them. It is important to bring the whole project team to the table to carry this out. Different members of the team will have different questions about the project, especially about the part that they are most involved in. If it helps, imagine that the project has already been going on for a few months. What burning questions will you have to help you monitor, enhance or correct a particular aspect of the project?

- Action - Craft the Actions you will take in running your project. An Action can be a decision you need to make, an action you need to take, or both. Tagging the questions to the related actions helps you prioritise the important ones. You may have a long list of questions, but not all of them lead to an action you care about (or know about at that point in time). For example, if you’re building an app that will only be used by a small team of 50 people, a question like “how does my app perform when 1,000 people are using it” is perfectly valid, but it is not immediately useful because it is unlikely to lead to an action you need to take. A question that is not ultimately tagged to an action may not need to be answered yet.

- Data - List the datasets and their data fields that you will generate or collect for your project. They can be generated from a system or be manually curated and maintained digital files, but the understanding must be that these are readily obtainable, and not merely a wishlist. If the data comes from an IT system, you should not simply replicate the actual normalised tables (often numbering in the hundreds), as this will make discussions about the QuADS diagram a lot more complicated. Instead, you should imagine what a dataset suitable for analytics will look like, and the tech teams should take note and confirm that exporting such a dataset is doable. Aim for as much detail as is necessary for discussions, but take care not to turn this into a technical data modelling diagram.

- Simulate - Finally, carry out a reasonable simulation to ensure that the Data can answer the Questions. Some questions require little effort: if the question is about the average scores of students in their Maths test, and your dataset clearly has the fields ‘score’ and ‘subject’, then little to no simulation is required. For questions that are more complex, or where you are unsure, you should simulate answering the question through charts and dashboards, scripts or other means. This process may require you to mock up some data according to your defined data fields. After the simulation, mark each Question with a “Yes” if it can be reasonably answered, along with any supporting charts, or a “No” if it cannot.
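The Maths example above can be simulated in a few lines. The sketch below uses only Python's standard library; the mock records, the `student_id` field and the `can_answer` helper are illustrative assumptions, not part of the QuADS framework.

```python
from statistics import mean

# Mock records following the planned data fields (all values are made up).
mock_records = [
    {"student_id": "S01", "subject": "Maths", "score": 72},
    {"student_id": "S02", "subject": "Maths", "score": 88},
    {"student_id": "S03", "subject": "Science", "score": 65},
    {"student_id": "S04", "subject": "Maths", "score": 80},
]


def can_answer(records, required_fields):
    """Return "Yes" if every record carries all required fields, else "No"."""
    ok = all(set(required_fields) <= set(record) for record in records)
    return "Yes" if ok else "No"


# Question: what is the average score of students in their Maths test?
answerable = can_answer(mock_records, ["subject", "score"])

# Simulate the analysis itself on the mock data.
maths_scores = [r["score"] for r in mock_records if r["subject"] == "Maths"]
average_maths = mean(maths_scores)

print(answerable, average_maths)
```

A question that needs a field missing from the planned dataset, such as a hypothetical `school` field, would come back as "No" from `can_answer`, signalling a gap to discuss before launch.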

With the completed QuADS diagram, the project team and their leaders can study it and understand how analytics-ready this project will be when it launches.

It is extremely important to note that not every question needs to be answered. Some questions may not even have an answer, or answering that question may be extremely difficult or so technically challenging that it is not really worth the effort. That is ok.

However, if answering an important question affects an important Action, then the team should take the necessary steps to ensure that the data is properly generated or collected. Sometimes this involves going back to the drawing board; it can be as simple as modifying a registration form for an event to capture the data you need, or as complex as modifying your technical design for an app.

Ultimately, the team and management will need to make a joint call on when to go ahead even with some potential gaps downstream. This is why analytics-readiness is a shared responsibility.

Principles

We foresee that frameworks will evolve to better implement the Analytics By Design methodology. Some organisations may adopt the QuADS framework as-is. Some may adapt it into a framework that integrates better into their workflow and culture. Others may even create a completely new framework that works better for them. Regardless, the following principles will help guide the implementation of the Analytics By Design methodology:

- Plan for analytics upstream, before the project begins, including the ability to export data effectively.

- Frame questions that help you, as the project owner, better understand the project.

- Connect the questions to the actions you will consciously take, or the decisions you will need to make, in your project.

- Design the data collection or generation to be able to answer those questions, prioritising questions that lead to an action.

- Achieve synergy between the Actions, Questions and Data by simulating the analytics process.

Signature Tools

To help project teams implement the Analytics By Design methodology, we are considering developing and rolling out two signature tools.

- QuADS Designer (Project Deerling): An interactive web-based tool that allows project teams to quickly frame the Questions, Actions and Data relevant to the project in a QuADS sheet. Teams can use the resulting QuADS diagrams to align expectations about analytics-readiness with management.

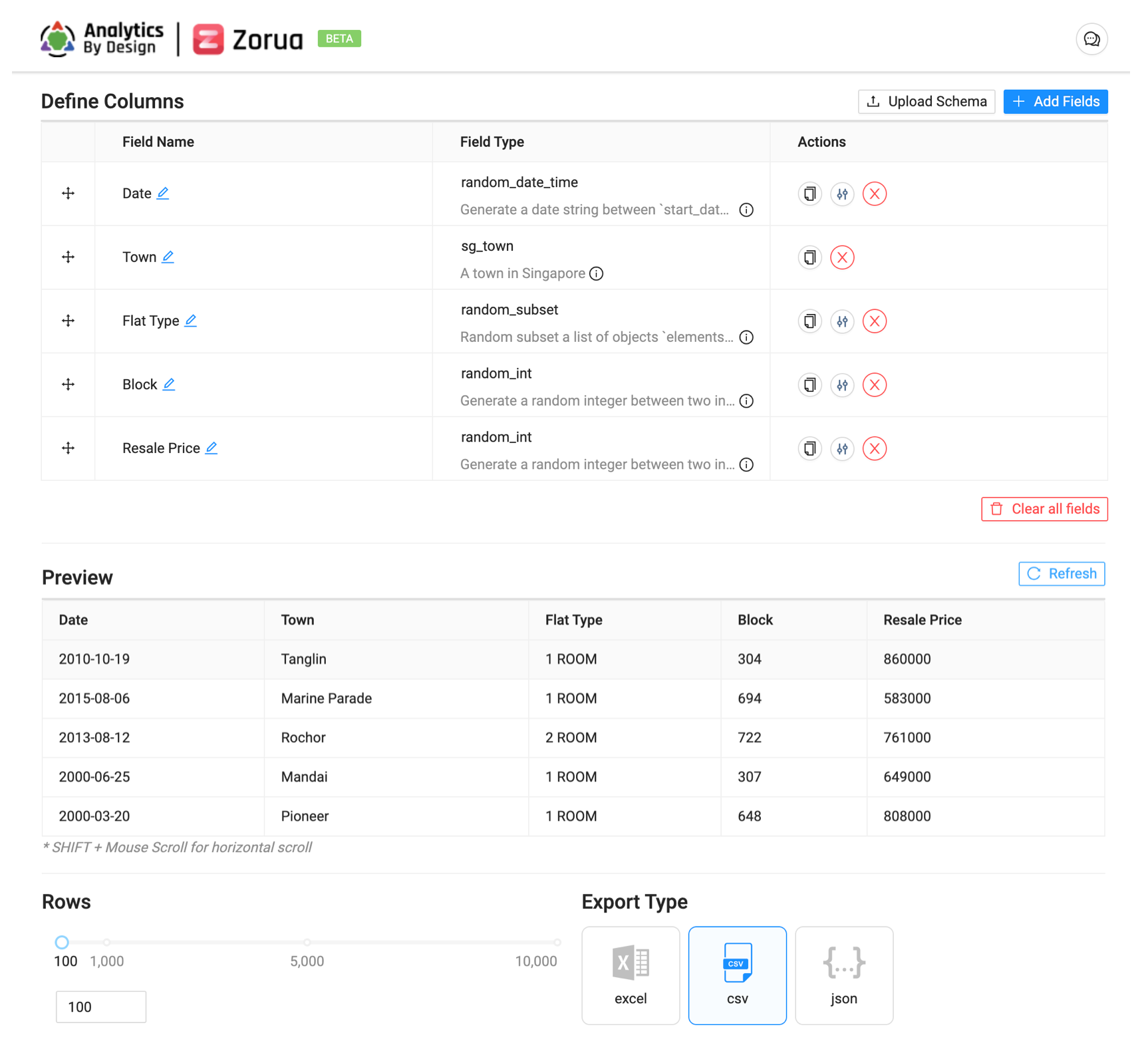

- Mock Data Designer: An interactive web-based tool that allows project teams to quickly generate huge datasets that simulate how they plan for their project to generate or collect data. With the mock data, teams can quickly check whether there is synergy between the Question, Action and Data. This tool can also help teams preview the data schema required for the downstream analytics work.

We Would Love to Hear from You

If you have any feedback on the Analytics By Design methodology, or are interested in these Signature Tools, please head over to https://go.gov.sg/abd-feedback to leave us your comments.

You can also join the Analytics By Design LinkedIn group.

Last updated 23 September 2025
